Fundamental Flaws in Meta-Analytical Review of Social Media Experiments

Ferguson's meta-analysis obscures social media impacts on mental health because it is based on an invalid design and erroneous data.

Recently, psychologist Chris Ferguson published a ‘meta-analytic review’ of social media experiments in which his primary conclusion was that experimental evidence undermines the notion that “reductions in social media time would improve adolescent mental health” and in which he declared:

Taken at surface value, mean effect sizes are no different from zero. Put very directly, this undermines causal claims by some scholars (e.g., Haidt, 2020; Twenge, 2020) that reductions in social media time would improve adolescent mental health.

Ferguson then proceeded to publicly announce that his study shows that “reducing social media time has NO impact on mental health” (sic).

These conclusions are demonstrably contradicted by the very data and results selected and calculated by Ferguson himself for his review.

Furthermore, Ferguson’s meta-analysis design is fundamentally invalid and his methodology is flawed, erroneous, and severely biased.

False Conclusions

By 2023, there were at least a dozen published studies investigating experimental impacts of reductions in the time spent using social media on depression or anxiety; these experiments typically lasted for 1 to 3 weeks and the participants were usually young adults, often college students.

The results from these studies overwhelmingly supported the view that reductions in social media (SM) use benefit mental health (MH).

Update August 2024: at this point there are at least 17 published studies investigating experimental impacts of reductions in the time spent using social media on depression or anxiety; and the results are still overwhelmingly in favor of beneficial effects on MH (I’ll add a link once a more formal analysis is available).

Ferguson could have acknowledged these results but expressed skepticism about the reliability of the studies. Ferguson also could have asked, as I did back in 2023, whether these MH benefits would last if SM reduction continued for months rather than just weeks.

Instead, Ferguson published a ‘review’ that withheld from the public any information about the SM time reduction impacts on depression and anxiety.

Instead, Ferguson published a ‘review’ that repeatedly but falsely implied that the experiments revealed there were no beneficial impacts of SM time reductions on depression and anxiety. Ferguson misdirects the public in this manner persistently within his review; even the title and the keywords displayed at the start of the review (“Keywords: social media, mental health, depression, anxiety”) mislead the public into thinking the review is about impacts on genuine MH disorders like depression and anxiety.

As we will see, however, even the ‘well-being’ results of the studies selected by Ferguson and the ‘composite’ effect sizes determined by Ferguson can be shown to provide evidence of impacts on mental health as well as, in multi-week field experiments, on aspects of well-being in general — but only once the outcomes measured and the duration of the experimental intervention are taken into account (something that Ferguson refused to do but we will).

With proper analysis, it turns out that it is only in the short-term field experiments that do not measure mental health that social media reductions appear to be ‘detrimental’ — an indication of withdrawal effects.

Imagine that usage of drug X is associated with doubled risks of clinical depression and that experiments show that a week or more of abstinence from drug X improves mental health but that during the first week it makes participants feel unhappy and frustrated due to withdrawal effects (but happier and more content later).

It would be absurd to misuse the adverse effects on momentary moods during the first week to declare that abstinence from drug X has no effects on mental health.

It would be equally absurd to declare that experimental results undermine theories that abstinence from Drug X will improve mental health.

And yet this is essentially what Ferguson does — in a manner hidden from the public — in order to undermine concerns about social media impacts on the mental health of adolescents.

Note that Haidt and Twenge hold that youth have become psychologically dependent on SM and therefore both Haidt and Twenge predict that short-term reductions in SM use among youth will produce momentary declines in some aspects of well-being due to withdrawal effects.

The irony here is that Ferguson misuses the experimental confirmation of these predictions by Haidt and Twenge to declare — while withholding any information about these withdrawal effect predictions by Haidt and Twenge — that the results actually undermine their theories! As a joke this would have been delightful, but there is no indication that Ferguson meant his paper as a spoof.

Furthermore, while the absurd misinterpretation of withdrawal effects as evidence against Haidt and Twenge — and the related conflation of MH disorders with various aspects of well-being — is particularly egregious, there are many other flaws and errors in Ferguson’s meta-analytic review.

To mislead the public as much as he did, Ferguson also had to set up a fundamentally invalid ‘random effects’ meta-analysis that relied on ill-defined ‘composite well-being outcomes’ that obscured well-defined depression and anxiety effects, include several studies that violated his own criteria (and fail to include several studies that did fit his criteria), assign indefensible effect sizes to several studies, and then misinterpret statistical results of his (already invalid) meta-analysis.

Update August 2024: Ferguson recently confirmed that he used the sample size values from his OSF table for his meta-analysis; these sizes are also erroneous, with the 3 largest errors being Przybylski (600 instead of 297), Lepp (80 instead of 40), and the Kleefield dissertation (82 instead of 53); in all these cases Ferguson assigned a much larger sample size to a study with a strongly negative effect size, biasing the meta-analysis toward Ferguson’s views (just as each of Ferguson’s indefensible effect size assignments and improper study inclusions also biased the results toward Ferguson’s views).

Most of all, however, Ferguson had to persistently withhold essential results and information — without these crucial omissions, the public would have immediately doubted Ferguson’s conclusions.

In particular, Ferguson had to withhold the effect sizes he had to calculate for depression and anxiety outcomes in order to determine the composite well-being outcomes.

Even with this information hidden, however, we will see the evidence is damning to Ferguson once we split his own ‘composite’ effect sizes according to whether a MH outcome (depression or anxiety) was included: for the SM use reduction field experiments that included a MH outcome, the average Ferguson effect size is d = +0.27, indicating MH benefits; but for the SM use reduction field experiments that did not include a MH outcome, the average Ferguson effect size is d = -0.12, indicating ‘well-being’ harm.

Further damning evidence appears once we split Ferguson’s effect sizes according to the duration of the SM use reduction (d = -0.18 for single day reductions, d = +0.02 for 1-week reductions, and d = +0.20 for multi-week reductions).

These results of course immediately indicate that (unsurprisingly) both the presence of a MH outcome and the duration of the reduction are crucial moderators — and that SM use reduction impacts on MH are obscured and overwhelmed in Ferguson’s invalid analysis, the results of which Ferguson then misinterprets as undermining the very notion that SM use reductions can benefit MH.

In short, Ferguson’s paper stands and falls on de facto censorship of evidence.

Let us now examine the various components of Ferguson’s paper in more detail.

Primary Assertions by Ferguson

The review was published in Psychology of Popular Media — see Do Social Media Experiments Prove a Link With Mental Health: A Methodological and Meta-Analytic Review.

Note: Ferguson has also placed the paper online.

In its Public Policy Relevance Statement, Ferguson declares that his meta-analysis “undermines” the notion that “reductions in social media time would improve adolescent mental health.”

In the Conclusion of his paper, Ferguson states that statistical insignificance of his meta-analytical result “undermines” the belief of Jonathan Haidt and Jean Twenge that reductions in social media usage would benefit adolescent mental health:

“Further meta-analytic evidence suggests that, even taken at face value, such experiments provide little evidence for effects. Put very directly, this undermines causal claims by some scholars and politicians that reductions in social media time would improve adolescent mental health. Thus, appeals to social media experiments may have misled more than informed public policy related to technology use.”

Indeed Ferguson has announced that his study “finds that reducing social media time has NO impact on mental health.”

Note: see https://osf.io/27dx6 for Ferguson’s list of studies and see https://osf.io/jcha2 for the list of effect sizes assigned by Ferguson to each study.

Categories of Experiments

To understand the incompatibility of experimental methods among the 27 studies included by Ferguson, we can recognize four basic categories:

Lab Experiments that last only 10 - 30 minutes. In these the ‘treatment’ is typically social media exposure such as requiring high school students to look at their own Facebook or Instagram page for 10 minutes, which resulted in momentary increase of self-esteem (Ward 2017).

Single-day Abstinence experiments that typically measure some aspect of well-being affected by withdrawal symptoms — such as in Przybylski 2022 where students reported lower Day Satisfaction at the end of the abstinence day.

One Week Reduction experiments that typically show lingering withdrawal symptoms coupled with mental health improvements when these are measured (see below).

Multi-Week Reduction experiments that tend to produce mental health improvements without any withdrawal symptoms (no well-being declines).

Another reason for incompatibility is the wide range of outcomes measured by these studies, ranging from asking students “How good or bad was today?” to using validated clinical measures of depression such as PHQ-9.

To complicate matters, two of the 27 studies are not social media time experiments as required by Ferguson’s own selection criteria. Furthermore, two reduction studies admit to having fundamentally failed to manipulate social media use as intended, which also should have disqualified these studies from Ferguson’s meta-analysis.

Conducting a fundamentally flawed meta-analysis that produces a single effect size to reveal a supposed ‘average impact’ of all these incompatible experiments is scientifically invalid. Misrepresenting this result as the effects of social media reductions on mental health is severe misinformation.

Experimental Evidence

We will now show that the very effect sizes determined by Ferguson, from the studies selected by Ferguson, completely contradict his conclusions.

Warning: Ferguson does not clearly define how he determines the effect sizes for his meta-analysis.

Warning: The effect size assigned by Ferguson to each study depends on which outcomes Ferguson decided to use — but Ferguson does not reveal which aspects of well-being he chose to be included in the ‘average’ WB effect size for each study. Needless to say, Ferguson therefore also never revealed the effect sizes he calculated for each component of the overall effect size for each study.

Example: Consider a study that measured six outcomes that some might reasonably consider mental well-being: positive affect, anxiety, self-esteem, FoMO (‘fear of missing out’), loneliness, and life satisfaction. Ferguson does not reveal which of the six WB outcomes he included in determining the overall ‘effect size’ that he assigned to the study.

Warning: Some of the effect size determinations by Ferguson are clearly indefensible or erroneous (See Erroneous Effect Size Determinations below).

Warning: Some of the studies are improperly included (violating Ferguson’s own criteria) while others were improperly excluded. (See Improper Inclusion and Exclusion of Studies below).

We will ignore these problems for now as the data contradicts Ferguson’s conclusions despite the fact that the above errors strongly bias the meta-analysis in favor of Ferguson’s conclusions. Indeed the intent of this section is to show that even if we do accept the effect sizes assigned by Ferguson as correct and unbiased, his evidence still contradicts his conclusions.

Before we start, let us note that if medication X harms males but benefits females, it would be absurd to combine these impacts within a meta-analysis in order to proclaim medication X has no effect.

In general, if there’s robust evidence that experimental results differ radically depending on some relevant factor such as gender or duration of treatment or type of outcome, it is invalid to proceed with a ‘random-effects model’ meta-analysis.
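To make the point concrete, here is a minimal sketch in Python of a DerSimonian-Laird random-effects pooling applied to invented numbers. The six effect sizes and their variances are hypothetical, chosen only to mimic two subgroups with opposite effects; they are not taken from Ferguson's data or from any study discussed here, and the function name random_effects is my own.

```python
import math

def random_effects(effects, variances):
    """DerSimonian-Laird random-effects estimate with a 95% confidence interval."""
    k = len(effects)
    w = [1.0 / v for v in variances]                      # inverse-variance weights
    fixed = sum(wi * y for wi, y in zip(w, effects)) / sum(w)
    q = sum(wi * (y - fixed) ** 2 for wi, y in zip(w, effects))  # Cochran's Q
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (k - 1)) / c) if c > 0 else 0.0  # between-study variance
    w_star = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * y for wi, y in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    return pooled, pooled - 1.96 * se, pooled + 1.96 * se

# HYPOTHETICAL data: subgroup A benefits, subgroup B is harmed.
group_a = [0.25, 0.30, 0.20]
group_b = [-0.20, -0.15, -0.25]
variance = 0.01  # assumed identical sampling variance for every study

pooled_all, lo_all, hi_all = random_effects(group_a + group_b, [variance] * 6)
pooled_a, lo_a, hi_a = random_effects(group_a, [variance] * 3)
pooled_b, lo_b, hi_b = random_effects(group_b, [variance] * 3)

print(f"all studies: d = {pooled_all:+.3f}, 95% CI [{lo_all:+.3f}, {hi_all:+.3f}]")
print(f"subgroup A:  d = {pooled_a:+.3f}, 95% CI [{lo_a:+.3f}, {hi_a:+.3f}]")
print(f"subgroup B:  d = {pooled_b:+.3f}, 95% CI [{lo_b:+.3f}, {hi_b:+.3f}]")
```

With these invented inputs the pooled interval straddles zero while each subgroup's interval excludes it, which is the pattern the medication X example describes: a single pooled estimate can hide two real and opposite effects.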

Mental Health versus Well-Being Outcomes

Let us first split the 21 field studies into the 9 that include a measure of a genuine mental health outcome like symptoms of depression or anxiety and the 12 that ignore such mental health outcomes (measuring only aspects of ‘well-being’ like feeling satisfied or feeling lonely).

Note: We count Gajdics as a WB study because the ‘state anxiety’ measure is not a valid indicator of a mental disorder, as it inquires only about momentary feelings during a single day.

Note: we leave out the 6 lab experiments that expose participants briefly (7-30 minutes) to some SM use, as it makes no sense to mix these with field experiments that last often weeks and allow the comparison of SM reduction groups with control groups that continue to use SM as usual.

Using the effect sizes determined by Ferguson himself, we see a radical difference in the average effect size of each group:

The 9 experiments that include a MH outcome: d = +0.22

The 12 experiments that do not include any MH outcome: d = -0.03

This is clear evidence that Ferguson misuses well-being outcomes to dilute effects on mental health.

Remember that the title of Ferguson’s paper is Do Social Media Experiments Prove a Link With Mental Health — yet Ferguson never informs the public that the experimental outcomes differ radically depending on whether a genuine mental health outcome is actually measured in the study.

Instead, Ferguson declares that experimental evidence undermines concerns of scholars like Haidt and Twenge that heavy social media use harms adolescent mental health. Not only that, Ferguson outright announces that experimental evidence shows that “reducing social media time has NO impact on mental health.”

Shorter versus Longer Duration

Let us now split the 21 field experiments according to the duration of ‘treatment’ (SM use reduction).

Note: We exclude the six lab studies (average d = +0.10) as these were all social media exposure experiments lasting 5 to 30 minutes. We include the 5-day experiment (Vanman 2018) in the One Week category.

The average effect sizes once again reveal radical differences:

Three Single-day experiments: d = -0.18

Eight One Week experiments: d = +0.02

Ten Multi-Week experiments: d = +0.20

The pattern illustrates how Ferguson misuses temporary withdrawal effects on well-being in short-term experiments to counter beneficial impacts of longer interventions.

Indeed multi-week experiments show substantial improvements.

Duration of experiment and types of outcome measurement (well-being vs mental health) explain much of the variation in effect sizes assigned by Ferguson. This alone invalidates Ferguson’s ‘random-effects’ meta-analysis — the appropriate methodology would be to meta-analyze subgroups or to perform a meta-regression.

Note: See A basic introduction to fixed-effect and random-effects models for meta-analysis.

Duration and Outcome

Let us now consider the influence of both duration and outcome; so we split the studies into five groups:

4 One Week MH: d = +0.25

7 Multi-Week MH: d = +0.27

3 Single Day WB: d = -0.18

4 One Week WB: d = -0.21

3 Multi-Week WB: d = +0.04
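As a quick arithmetic check, the five subgroup averages above can be recombined, weighting each by its number of studies, into averages by duration and by outcome type. The sketch below assumes each listed d is an unweighted mean of the studies in its group; the tuple layout and the helper name recombine are my own. Because the inputs are already rounded, the recombined values are approximate.

```python
# (label, duration, outcome type, number of studies, mean d) as listed above
subgroups = [
    ("One Week MH", "one_week", "MH", 4, 0.25),
    ("Multi-Week MH", "multi_week", "MH", 7, 0.27),
    ("Single Day WB", "single_day", "WB", 3, -0.18),
    ("One Week WB", "one_week", "WB", 4, -0.21),
    ("Multi-Week WB", "multi_week", "WB", 3, 0.04),
]

def recombine(rows, key_index):
    """Study-count-weighted mean d for each value of the chosen grouping column."""
    totals = {}
    for row in rows:
        key, n, d = row[key_index], row[3], row[4]
        count, weighted_sum = totals.get(key, (0, 0.0))
        totals[key] = (count + n, weighted_sum + n * d)
    return {key: (count, s / count) for key, (count, s) in totals.items()}

by_duration = recombine(subgroups, 1)  # group on the duration column
by_outcome = recombine(subgroups, 2)   # group on the outcome-type column

for name, table in (("duration", by_duration), ("outcome", by_outcome)):
    for key, (count, mean_d) in table.items():
        print(f"{name:8s} {key:11s} n = {count:2d}  d = {mean_d:+.3f}")
```

The duration rows come out at about -0.18, +0.02, and +0.20, matching the duration split given earlier, and the outcome rows come out at roughly +0.26 for 11 MH experiments and -0.13 for 10 WB experiments, close to the d = +0.27 and d = -0.12 quoted in the introduction.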

This reveals that what skews the meta-analysis toward zero is the 7 shortest experiments that ignore mental health but focus on well-being measures likely to be influenced by withdrawal effects.

To recapitulate: in view of the effect sizes determined by Ferguson himself, his assertions about the experiment results showing zero impacts on mental health and undermining concerns about social media are blatantly counterfactual.

Six Fundamental Flaws (Statistical Insignificance)

Let us for a moment ignore the fundamental invalidity of Ferguson’s meta-analytical design.

There are six primary reasons why Ferguson's meta-analysis produced a statistically insignificant result:

1) Ferguson dilutes mental-health effects by including general ‘well-being’1 outcomes; for example, in a study that measures depression using a clinically validated scale, Ferguson may create a composite ‘WB’ effect size that conflates this MH outcome with the outcomes of WB aspects such as mood, life satisfaction, or loneliness. And yet Ferguson misrepresents his analysis as measuring MH outcomes and its results as undermining concerns of scholars like Haidt & Twenge about SM impacts on mental health outcomes such as depression and anxiety.

2) Ferguson includes experiments that do not measure any mental health outcome at all — in fact only 11 experiments measure MH2 at all (and then only as one component of the composite effect size) while all 19 experiments measure only aspects of general well-being.34 For example, Ferguson included Deters 2013, in which the only outcome was loneliness.

3) Ferguson’s meta-analysis interprets withdrawal symptoms5 as evidence that social media use reductions are harmful (and that SM are beneficial) and so attenuates the overall effect size.

4) There are two indefensible effect size determinations.

5) Two experiments are improperly included in violation of Ferguson’s own inclusion criteria, along with two improperly included experiments in which digital screen time of the SMU reduction participants actually increased.

6) A number of studies were improperly excluded.

Each one of these mistakes biased the meta-analysis in support of Ferguson’s views. Correcting these errors (or even one of the large ones) would flip the result of Ferguson’s meta-analysis to statistical significance, removing a key premise of his main arguments.6

To better understand the severity of some of the problems, note that, out of the 27 effect sizes assigned by Ferguson, the strongest one to support his conclusions is from a study7 whose authors themselves agree the effect size should be nearly the opposite — Ferguson essentially reversed the sign of the effect.8

Note also that the confidence interval in Ferguson’s meta-analytical result barely included zero and so all the flawed components mentioned above — the obfuscation of mental health impacts by general well-being outcomes, the misuse of withdrawal symptoms to counter mental health improvements, the study selection mistakes, and the effect size assignment errors — were required for Ferguson to obtain a statistically insignificant outcome.

In this critique, I will concentrate only on the major problems specific to the meta-analysis. There are also a number of other problems with the review, such as Ferguson’s misinterpretations of statistical insignificance and effect sizes.

Unfortunately, the publication of Ferguson’s meta-analytical review in a prominent journal and its coverage in the news media9 as well as its promotion by Ferguson and others ensures the erroneous review will be used to spread misinformation about adolescent mental health.

Erroneous Data

Although the design of Ferguson’s meta-analysis is severely flawed and fundamentally invalid, it would not have produced a ‘null’ result (a confidence interval that includes zero) had it been applied to accurate data.

Ferguson’s meta-analysis produced a statistically insignificant result only because Ferguson provided it with erroneous data.

Ferguson does so as follows:

Two effect sizes are clearly erroneous.

Two effect sizes were improperly included.

At least five experiments indicating social media harms were not included.

Note that Ferguson assigns a negative effect size to indicate that reducing SM harms MH or that exposure to SM benefits MH. A negative effect size in this scheme therefore indicates that SM is good for MH.

Each of the above mistakes biases the meta-analysis toward the negative effect size (signifying that social media reductions are harmful — and so SM itself is beneficial).

Improper Inclusion and Exclusion of Studies

Ferguson included two studies that violate his own criteria for inclusion: neither Gajdics 2021 (d = -0.364) nor Deters 2013 (d = -0.207) is an experiment manipulating time spent on social media (see Appendix: Study Inclusion and Exclusion for details).

Note: Ferguson reportedly performed his search for the studies in the Fall of 2023.

Ferguson failed to include a number of SM reduction studies that indicated social media harm, such as Mosquera 2019, Reed 2023, Turel 2018, or Graham 2021.10

Ferguson also failed to include Engeln 2020 — a social media exposure study similar to the other laboratory studies included by Ferguson. The study found significant well-being declines after exposure to SM.

Note: this is not meant to agree in any way that the conflation of lab experiments with SMU reduction field experiments makes any sense whatsoever; my criticism simply concerns the inconsistency regarding selection criteria.

Therefore two substantially negative effect sizes should not have been included while at least five positive effect sizes were missing from the data. Note that it is beyond the scope of this critique to locate all the missed studies.

Erroneous Effect Size Determinations

Comparing effect sizes assigned by Ferguson with information in the Abstracts of the studies raises red flags for two experiments: Brailovskaia 2022 (d = 0) and Lepp 2022 (d = -0.365); subsequent examination of the full text confirmed that the effect size assignments by Ferguson are indeed erroneous.11

Brailovskaia 2022 (d = 0) found substantially positive effects on all well-being outcomes — the assignment of d = 0 by Ferguson is a mystery (perhaps he miscalculated).

Lepp 2022 (d = -0.365) was assigned the most negative effect size by Ferguson of all the 27 experimental results on his list, and yet both the data and the conclusions of the authors indicate a very substantial positive effect size.

After I sent an email to Ferguson regarding the Lepp 2022 study, Andrew Lepp of Kent State University, whom I copied, replied to thank me “for the accurate description of our referenced study” and urged Ferguson to answer my inquiry. Ferguson replied solely to Lepp, stating: “I’m comfortable with my interpretation of your data as related to the specific questions of the meta”.12

Therefore the pinnacle of Ferguson’s evidence that social media does no harm comes from a study whose authors concluded the opposite and later agreed with my criticism — Ferguson in essence flipped the sign of the effect and refuses to admit any error.

For a detailed discussion of each of the erroneous effect sizes, including tables and graphs, see Appendix: Erroneous Effect Size Determinations.

Impact of Data Errors on Meta-Analysis

Since Ferguson’s meta-analysis produced a confidence interval that barely includes zero, correcting any one of the nine major data errors would likely produce a statistically significant finding.13

For example, correcting Lepp 2022 from d = -0.365 to a reasonable d = 0.250 increases the average effect from d = +0.084 to d =+0.107.14

This illustrates the sensitivity of the average to even a single major error in the determination of effect size by Ferguson.

If we also assign a reasonable estimate15 of d = 0.1 to Brailovskaia 2022 and remove the two studies that violate Ferguson’s criteria, the average increases to approximately d = +0.14.
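The arithmetic behind these two figures can be reproduced with a short sketch. It assumes the "average effect" is the simple unweighted mean of the 27 effect sizes (the numbers quoted above are consistent with that reading) and it starts from the rounded mean of 0.084, so the results are approximate. The variable names are my own.

```python
n_studies = 27
reported_mean = 0.084              # unweighted mean of the 27 effect sizes, as quoted above (rounded)
total = n_studies * reported_mean  # implied sum of all 27 effect sizes

# Step 1: replace the Lepp 2022 value (-0.365) with the proposed +0.250.
total_lepp = total - (-0.365) + 0.250
mean_lepp = total_lepp / n_studies

# Step 2: also move Brailovskaia 2022 from 0 to 0.1, then drop the two studies
# that violate the inclusion criteria: Gajdics (-0.364) and Deters (-0.207).
total_all = total_lepp - 0.0 + 0.1
total_all -= (-0.364) + (-0.207)
mean_all = total_all / (n_studies - 2)

print(f"after correcting Lepp 2022: d = {mean_lepp:+.3f}")
print(f"after all four corrections: d = {mean_all:+.3f}")
```

A single correction shifts the mean by 0.615 / 27, or about 0.023, which is why one large error is enough to matter when the confidence interval only barely includes zero.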

In my estimate, correcting the data errors and adding all the missing studies could easily double the effect size produced by Ferguson’s meta-analysis.

Top 10 Studies by Negative Effect Size

To better understand the problematic state of evidence that supports Ferguson’s conclusion that reducing social media use does not benefit mental health, let us briefly look at each of the top 10 studies per the negative effect size assigned by Ferguson:

Lepp 2022 -0.365: This is the lab experiment where Ferguson essentially flipped the sign of the largest effect size.

Gajdics 2022 -0.364: This is a single-day no phones in school experiment, not a time spent on social media study (as required by the criteria set by Ferguson). Inclusion of Gajdics 2022 would require inclusion of similar experiments, like Brailovskaia 2023.

Vally 2019 -0.361: One week of sudden abstinence produced declines in aspects of well-being (withdrawal effects); MH was not measured.

Ward dissertation 2017 -0.298: SM lab experiment (only 22 participants in the control group) where H.S. students spending 10 minutes looking at their own Facebook page experienced an increase in self-esteem but “this effect disappeared when controlling for gender.” No substantial effect on depression.

Kleefield dissertation 2021 -0.277: a very small experiment (only 27 participants in the control group) where one week of social media reduction led to lower self-esteem and no substantial change in anxiety and depression.

Deters 2013 -0.207: This study manipulates Facebook status updates, not time spent on social media (as required by the criteria set by Ferguson). MH was not measured.

Przybylski 2021 -0.152: One day of sudden social media abstention lowered Day Satisfaction (withdrawal effects); MH was not measured.

Collis 2022 -0.138: In this failed semester-long experiment, students migrated from Snapchat to WhatsApp (not counted as SM by authors) and digital screen time in treatment increased. MH was not measured.

Vanman 2018 -0.135: Those who abstained from Facebook for 5 days experienced reduced physical stress (lower cortisol) but also reduced satisfaction.16 MH was not measured.

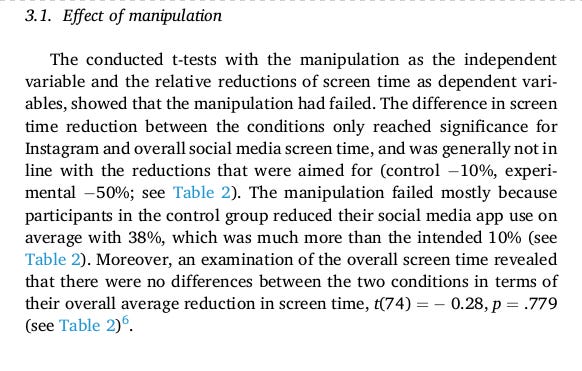

van Wezel 2021 -0.123: Social media reduction experiment that failed because the control group unexpectedly also reduced social media use greatly (“thus dismantling our intended screen time manipulation”); furthermore, digital screen time declined more in control than in treatment. MH was not measured.

Conclusion

Ferguson’s meta-analytical result relies on a fundamentally flawed methodology and highly erroneous data and therefore lacks scientific validity.

Ferguson’s review, however, should not be merely ignored, as its publication in a prominent journal elevates it to a potent source of misinformation that can be used to dismiss concerns about the impact of social media on adolescent mental health.

Appendix

Study Inclusion and Exclusion

Ferguson declared that “studies must examine time spent on social media use” and yet he included two studies that violate this requirement.

Gajdics 2021 (d = -0.364) is not a true social media experiment because it is a one-day, no-phones-in-high-school experiment. The effects of what Gajdics refers to as ‘nomophobia’ (NO MObile PHone PhoBIA) are no doubt much stronger than any impacts related to social media use during school hours. In other words, the effects are unlikely to be primarily about social media; they are about phone abstention and the resulting nomophobia.

If Gajdics is included, there is no justification for excluding other phone abstinence experiments, such as Brailovskaia 2023. Making exceptions for some such studies impairs the integrity of the selection process.

Since Gajdics 2021 has the second strongest negative d in the meta-analysis, its proper exclusion would have had considerable impact. Note that Gajdics portrays his findings as confirmation of ‘nomophobia’ — psychological dependence on phones.

In Deters 2013 (d = -0.207) the treatment is to only increase frequency of updating Facebook status for a week, the result being a decline in feelings of loneliness. There is, however, no evidence whatsoever in the study that time spent on social media increased.

Studies that were missed include Mosquera 2019, which found significant declines in depression risk after one week of Facebook abstention (and no declines in well-being), Reed 2023, which found significant declines in depression risk after 3 months of reduced social media use, Turel 2018, which found reductions in perceived stress following SM abstinence, and Graham 2021, which found improvements in wellbeing after a 1 week SM abstinence.

The exclusion of Mosquera 2019, a study that found a significant decrease in depression after one week of Facebook abstention, is all the more puzzling given that it has been covered in the media (see College students who go off Facebook for a week consume less news and report being less depressed).

Engeln 2020 is a social media exposure study similar to the other laboratory studies included by Ferguson, and yet it was excluded for no apparent reason.

Reed 2023, which found significant declines in depression risk after 3 months of reduced social media use, was published in February 2023, before several other studies on Ferguson’s list.

Some of the missed studies, such as Mosquera 2019 and Engeln 2020, have long been included in a list of experimental studies maintained by Haidt and Twenge that is well known to Ferguson (see Omission of Major Source below).

Omission of Major Source

Ferguson credits two blog posts as valuable sources that he consulted when searching for experimental studies:

Studies identified by previous commentators (e.g., Hanania, 2023; Smith, 2023) as this was valuable locating studies in other fields such as economics.

N. Smith, however, refers to Hanania for a list of studies, and R. Hanania explicitly states that the studies he mentions are from a list created by Haidt & Twenge:

Jonathan Haidt and Jean Twenge have put together a Google doc that contains all studies they can find relevant to whether social media use harms the mental health of young people.

This list of studies compiled by Haidt & Twenge is, to the best of my knowledge, by far the most complete source available, and it is often referred to by both Haidt and Twenge in their work, which Ferguson criticizes in his review.

Ferguson could have avoided missing several studies had he consulted the Haidt & Twenge list. Ferguson is well aware of this source, and has himself left comments on it from 2019 up to 2023.

It is remarkable that Ferguson praises two blog posts as valuable sources when both posts depend entirely on the list compiled by Haidt & Twenge, which Ferguson simply ignores.17

Erroneous Effect Size Determinations

Comparing the Ferguson effect size with information in the Abstracts of the studies raises red flags for two experiments: Brailovskaia 2022 and Lepp 2022; subsequent examination of the full text confirmed that the effect size assignments by Ferguson are indefensible.

Study #1: Incorrect Effect Size for Lepp 2022

The effect size that Ferguson assigned to Lepp 2022 is the highest negative impact of all the studies on his list: d = -0.365.

The Lepp study was a social media exposure experiment and the authors concluded it provided evidence that such exposure was detrimental to well-being:

Results demonstrated that social media use for 30 min caused a significant decrease in positive affect.

So how is it possible that this experiment received the largest negative effect size among all the studies, indicating that social media exposure benefited participants greatly?

The only plausible answer is the linguistic misuse by Ferguson of the term ‘control’ as used by the authors when describing their experiment:

All participants completed the following 30-minute activity conditions: treadmill walking, self-selected schoolwork (i.e., studying), social media use, and a control condition where participants sat in a quiet room (i.e., do nothing).

The problem is that the ‘control condition’ was not intended to mean what control typically means in experiments, that is a test for a placebo (or nocebo) effect. This is clear from the following hypothesis stated by the authors:

Indeed the college students who were required to sit in class and literally do nothing for 30 minutes were understandably very irritated by this and it showed up on the post-test results:

So positive affect scores fell greatly after social media exposure, but they fell similarly after imposed inactivity. If Ferguson treated the control group as a true control condition, the deleterious impact of social media exposure on positive affect was ‘canceled’ by the similar impact of imposed inactivity.

The deleterious impact of imposed inactivity was even more pronounced for negative affect (some very angry students):

Social media had little impact on negative affect, but if Ferguson subtracted the deleterious impact of ‘imposed inactivity’ from the no effect of social media exposure, the resulting effect size would indicate substantial (but illusory) benefits of social media exposure.
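The arithmetic behind this sign flip can be sketched in a few lines. The numbers below are hypothetical placeholders chosen only to mirror the pattern described above (little change after social media, a clear worsening after imposed inactivity); they are not values from Lepp 2022, and the helper name `relative_effect` is my own.

```python
# Hypothetical pre-to-post changes in negative affect (illustrative only,
# NOT taken from Lepp 2022). A positive change means negative affect got worse.
change_social_media = 0.0   # assumed: little change after social media use
change_inactivity = 0.8     # assumed: clear worsening after imposed inactivity


def relative_effect(change_condition, change_reference):
    """Change in the condition of interest minus change in the reference arm."""
    return change_condition - change_reference


# Treating imposed inactivity as if it were a neutral placebo-style control:
vs_inactivity = relative_effect(change_social_media, change_inactivity)

# Treating "no change" as the neutral reference instead:
vs_no_change = relative_effect(change_social_media, 0.0)

print("social media vs. imposed inactivity:", vs_inactivity)
print("social media vs. no change:", vs_no_change)
```

With the inactivity arm as the reference, social media appears to lower negative affect, even though in this sketch it did not change negative affect at all. The apparent benefit comes entirely from the reference arm getting worse.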

Needless to say, the authors never consider the ’true’ impact of social media exposure to be one where the imposed inactivity effects are subtracted as if they were a placebo (nocebo) effect. They clearly did not intend to use the term ‘control’ to imply such a misuse, otherwise they could not have concluded that social media use is bad for students and recommend in their Conclusion that students should be minimizing social media use.

After I emailed Ferguson with the Lepp 2022 effect size criticism above and asked if there would be a correction, Andrew Lepp of Kent State University, whom I copied, replied to thank me “for the accurate description of our referenced study” and urged Ferguson to answer my inquiry. Ferguson replied solely to Lepp, stating: “I’m comfortable with my interpretation of your data as related to the specific questions of the meta”.

Thus out of the 27 studies examined by Ferguson, the one providing the supposedly strongest evidence against harmful impacts of social media (per Ferguson’s effect sizes) comes from a study whose authors concluded the opposite and dispute Ferguson’s effect size determination.

Study #2: Incorrect Effect Size for Brailovskaia 2022

The effect size for Brailovskaia 2022 on Ferguson’s list is d = 0.

In reality, the authors report numerous benefits to well-being:

Results In the experimental groups, (addictive) SMU, depression symptoms, and COVID-19 burden decreased, while physical activity, life satisfaction, and subjective happiness increased.

There is no indication of any other well-being measures that could counter the ones mentioned by the authors (even smoking declined in the social media reduction group).

The benefits of social media reduction remained even 6 months after the experiment ended:

I’m at a loss as to how this could translate to a d = 0 result unless this effect size is a typo.

Failed Experiments

Some experiments on Ferguson’s list actually failed to reduce social media use in the treatment group or even to prevent reduction of social media use in the control group, rendering the measure of ‘impact’ dubious if not outright meaningless.

For example, consider the following admission in van Wezel 2021:

This experiment did not even have a true control group where participants would continue social media use as usual, and the attempt by researchers to induce different degrees of reduction between two groups was a complete failure — in fact the ‘control’ group reduced screen time more than the ‘treatment’ group.

Collis 2022 is another experiment gone wrong:

In the experiment, we randomly allocate half of the sample to a treatment condition in which social media usage (Facebook, Instagram, and Snapchat) is restricted to a maximum of 10 minutes per day. We find that participants in the treatment group substitute social media for instant messaging and do not decrease their total time spent on digital devices.

In fact students in the treatment group increased their digital tech use:

Remarkably, although students in the treatment group significantly reduced their social media activities, their overall digital activities overall are not affected but, in fact, exceed those of the control group in block 2 (t-test, p = 0.026). This result indicates that students substituted or even overcompensated their social media usage with other activities.

The authors further specify: we see that participants in the treatment group substituted their use of social media services for instant messaging apps (e.g. WhatsApp).

The authors do not justify the categorization of Snapchat as social media even though Snapchat is routinely described as a multimedia instant messaging app. Nor do the authors justify exclusion of WhatsApp from social media, even though it is routinely used by students for communication within large groups.

Omission of a Systematic Review

Any proper meta-analysis begins with a systematic review (see Meta-Analysis 101) — such a review would, however, reveal that social media reduction experiments produce declines in depression risk.

Furthermore, a systematic review would have revealed that a single meta-analysis of all the studies Ferguson included is impossible due to incompatible differences in experimental design and measured outcomes.

Arbitrary Methodology of Effect Size Assignment

Besides the two instances of clearly indefensible effect size assignments, Ferguson’s methodology is highly arbitrary.

First, wherever there is only a regression result (such as big F or eta), Ferguson seems to convert it to Cohen’s d without concerns about underlying assumptions about data distribution.
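To make the role of these assumptions concrete, here is a minimal sketch of two common textbook conversions to Cohen’s d. I do not know which formulas Ferguson actually used; the function names are mine, and both conversions hold only for a simple comparison of two independent groups (no covariates, no repeated measures), with the eta-squared version additionally assuming equal group sizes.

```python
import math


def d_from_f_two_groups(f_stat, n1, n2):
    """Cohen's d from a one-way F with exactly two independent groups.

    Uses F = t^2 and d = t * sqrt(1/n1 + 1/n2). The sign of the effect is
    lost and must be recovered from the group means.
    """
    return math.sqrt(f_stat * (n1 + n2) / (n1 * n2))


def d_from_eta_squared(eta_sq):
    """Cohen's d from eta squared, assuming two independent groups of equal size."""
    return 2 * math.sqrt(eta_sq / (1 - eta_sq))


# Illustrative inputs only (not from any study in the review).
print(d_from_f_two_groups(4.0, 50, 50))
print(d_from_eta_squared(0.2))
```

If the reported F or eta comes from a model with covariates, more than two groups, or a within-subject design, these formulas no longer give a d that is comparable to a plain group difference, which is why applying them mechanically is a concern.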

Second, there is no uniformity across studies regarding adjustments for demographics and even other variables, so this is up to the authors, whose reasons may also be rather arbitrary.18 Ferguson seems to ignore such adjustments when both adjusted and unadjusted results are presented.

Third, there is conflation between group differences and regression, which are fundamentally distinct, as is illustrated by Lord’s paradox. Sometimes both results are presented in a study; it is unclear how Ferguson decides which one to select.

Note that in Lambert 2022, Ferguson seems to just compare post-intervention Treatment and Control groups, using Cohen’s formula (so he uses post-treatment SDs for the pooled SD). This can make sense in large RCTs where control and treatment groups are closely matched for not only demographics but also all outcomes of interest. In social media experiments, however, the sample sizes rarely allow such balancing, and it is seldom attempted. Thus Ferguson’s method sometimes ignores the baseline differences in well-being outcomes between treatment and control groups.
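A small sketch shows how much a baseline imbalance can matter. The scores below are hypothetical and not taken from Lambert 2022 or any other study; the change-score standardization is just one of several possible choices, used here only for illustration.

```python
import math


def pooled_sd(sd1, n1, sd2, n2):
    """Pooled standard deviation of two independent groups."""
    return math.sqrt(((n1 - 1) * sd1**2 + (n2 - 1) * sd2**2) / (n1 + n2 - 2))


def cohens_d(mean1, sd1, n1, mean2, sd2, n2):
    """Cohen's d: difference in means divided by the pooled SD."""
    return (mean1 - mean2) / pooled_sd(sd1, n1, sd2, n2)


# Hypothetical depression scores (lower is better); illustrative values only.
n = 30
sd_post = 4.0
treat_pre, treat_post = 14.0, 12.0   # treatment group improves by 2 points
ctrl_pre, ctrl_post = 12.0, 12.0     # control group does not change

# Post-only comparison: ignores that the groups started at different levels.
d_post_only = cohens_d(treat_post, sd_post, n, ctrl_post, sd_post, n)

# Difference in pre-to-post change, standardized by the pooled post SD.
d_change = ((treat_post - treat_pre) - (ctrl_post - ctrl_pre)) / pooled_sd(
    sd_post, n, sd_post, n
)

print("post-only d:", d_post_only)
print("change-score d:", d_change)
```

In this sketch the post-only comparison reports no effect, while the change-score comparison reports a half-SD improvement in the treatment group (negative here simply means fewer symptoms; sign conventions differ between reports).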

Note also that not all the studies include all the necessary information, allowing only a rough estimate of an effect size. For example, in Vanman 2018 an effect size (Cohen’s d = -0.54) is given for only one out of the four well-being outcomes, with the other three merely being noted as having no significant effect; Ferguson assigned effect size d = -0.54/4 = -0.135, indicating he just presumed the other three to be simply zero.
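The Vanman 2018 arithmetic, and its sensitivity to the zero assumption, can be checked directly. The reported d = -0.54 and the count of four outcomes come from the text above; the alternative values for the three unreported outcomes are hypothetical and serve only to show how much the study-level figure depends on that assumption.

```python
reported_d = -0.54  # the one effect size reported in Vanman 2018 (per the text above)


def study_average(effect_sizes):
    """Simple unweighted mean of the outcome-level effect sizes."""
    return sum(effect_sizes) / len(effect_sizes)


# Assumption apparently made by Ferguson: the three other outcomes are exactly zero.
zeros_assumed = study_average([reported_d, 0.0, 0.0, 0.0])

# Hypothetical alternative: the three other outcomes are small, non-significant,
# and point in the same direction (values invented for illustration).
small_same_direction = study_average([reported_d, -0.10, -0.10, -0.10])

print("zeros assumed:", round(zeros_assumed, 3))
print("small effects assumed:", round(small_same_direction, 3))
```

A non-significant outcome is not the same as an outcome of exactly zero, so the study-level value could plausibly differ from -0.135 in either direction.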

Misinterpretation of Statistical Results

To reach the conclusion that evidence undermines concerns about social media, the flawed meta-analysis and the erroneous data are not sufficient — Ferguson must also misinterpret the statistical results.

Ferguson does so by misinterpreting lack of statistical significance as evidence that there is no effect.
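A quick sketch of why “not significant” is different from “no effect”: a confidence interval that includes zero can also include effects of practical size. The estimate and standard error below are hypothetical round numbers, not Ferguson’s results, and the interval uses the usual normal approximation.

```python
def ci95(estimate, standard_error):
    """Approximate 95% confidence interval using the normal critical value 1.96."""
    z = 1.96
    return (estimate - z * standard_error, estimate + z * standard_error)


# Hypothetical pooled estimate and standard error (illustrative only).
d_hat = 0.10
se = 0.06

low, high = ci95(d_hat, se)
print("95% CI:", (round(low, 3), round(high, 3)))
print("includes zero:", low < 0 < high)
print("includes d = 0.2:", low < 0.2 < high)
```

Such an interval is compatible with no effect, but it is equally compatible with an effect around d = 0.2, so it cannot be read as evidence that the effect is zero.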

Ferguson also wrongly dismisses anything below d = 0.21 as too low to be of practical importance, a mistaken view based on misunderstanding rank correlations involving small ordinal scales.19

Misinterpretation of Heterogeneity

Ferguson admits that his meta-analysis suffers from extreme heterogeneity and implies that this is due to the methodological bias of researchers trying to prove social media is harmful when in reality the heterogeneity is the product of Ferguson’s own improper methodology (a potpourri of incompatible experiments and outcomes).

Since high heterogeneity in a ‘random effects’ meta-analysis tends to widen confidence intervals, it is remarkable that Ferguson’s meta-analysis would still have produced a statistically significant result, with its CI well above zero, had Ferguson fed it correct data.
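The widening can be illustrated with the standard inverse-variance weighting used in random-effects models, where each study’s weight is 1 / (v + tau²). This is a minimal sketch, not a reconstruction of Ferguson’s analysis: the effect sizes and variances are hypothetical, and the between-study variance `tau_sq` is supplied by hand rather than estimated from the data.

```python
import math


def random_effects_pool(effects, variances, tau_sq):
    """Inverse-variance pooled estimate and its standard error.

    Each study is weighted by 1 / (within-study variance + tau_sq), where
    tau_sq is the between-study variance (passed in, not estimated here).
    """
    weights = [1.0 / (v + tau_sq) for v in variances]
    total = sum(weights)
    pooled = sum(w * d for w, d in zip(weights, effects)) / total
    standard_error = math.sqrt(1.0 / total)
    return pooled, standard_error


# Hypothetical study-level effect sizes and sampling variances (illustrative only).
effects = [0.30, 0.10, -0.05, 0.20]
variances = [0.01, 0.02, 0.04, 0.015]

for tau_sq in (0.0, 0.05):
    pooled, se = random_effects_pool(effects, variances, tau_sq)
    half_width = 1.96 * se
    print(f"tau^2={tau_sq}: pooled d={pooled:.3f}, 95% CI half-width={half_width:.3f}")
```

Larger heterogeneity shrinks every weight, so the pooled standard error grows and the interval widens. It also pulls the weights closer together, which moves the pooled estimate toward the simple unweighted average of the effect sizes.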

Sample Size of Experiments

The sample size of experiments (as determined by Ferguson) is a strong moderator of the impacts on well-being per the effect size values assigned by Ferguson: out of the 27 studies, the 13 higher-powered experiments produced a far greater average impact (d = 0.15) than the 13 lower-powered studies (d = 0.01).20

This is yet another reason why Ferguson’s ‘random effects’ meta-analysis makes no sense, since the differences in the outcomes between the experiments are quite predictable.

By failing to include sample size in his moderators analysis, which is routine in such examinations of meta-analytical moderators, Ferguson was also able to evade the obvious question as to why a larger sample size is such a strong predictor of beneficial impacts in these experiments.

Misleading Terminology

The title Do Social Media Experiments Prove a Link With Mental Health is incorrect because Ferguson enlarges the scope to aspects of general well-being (that is outcomes such as answers to How good or bad was today?) that often have little to nothing to do with mental health.

Similarly, Table 1 is labeled Meta-Analytic Results of Social Media and Mental Health Outcomes when in reality it is based on well-being outcomes.

Lack of Transparency

Ferguson does not reveal how he calculated the effect size for each study, nor even which outcomes he considers ‘well-being’ — and he never defines how precisely the effect size for a study should be calculated when it is clear which outcomes should be included.

In view of this methodological fuzziness, the very notion of ‘correct’ effect size needs to be viewed in quotation marks to indicate there is no clear definition by Ferguson. The issue is ‘defensible’ versus ‘indefensible’ rather than ‘correct’ versus ‘incorrect’ effect sizes.

Flawed Methodological Review

The ‘meta-analytical review’ is only half of Ferguson’s paper. The other half is a ‘methodological review’ that is as erroneous and flawed as the first half, but that is a topic for a separate critique.

Of particular relevance here is that the methodological half of Ferguson’s article essentially dismisses all the experiments as too flawed to provide evidence against social media, and yet Ferguson promotes his meta-analysis as supposedly showing these experiments undermine concerns about social media.

Apr 30 Update: removed the analogy with weight and health.

May 25 Update: a major rewrite to incorporate erroneous effect sizes etc.

June 3 Update: added 3 missing studies.

August Update: several updates and modifications, with focus mainly on studies and data within the review rather than on studies not included in the review (there are too many such missing studies by now, demanding a separate article).

Remarkably, Ferguson never specifies which outcomes he considers mental well-being.

By MH we mean symptoms of disorders such as depression or anxiety — not aspects of WB such as ‘life satisfaction’ or ‘loneliness’ or ‘FoMO’ or ‘affect’ that by themselves do not indicate any mental disorder.

See https://osf.io/27dx6 for Ferguson’s list of studies.

In the Abstract, Ferguson states: […] some commentators are more convinced by experimental studies, wherein experimental groups are asked to refrain from social media use for some length of time, compared to a control group of normal use. This meta-analytic review examines the evidence provided by these studies.

In reality, only 16 of the 27 studies ask participants to reduce SM use.

These are temporary declines in some aspects of well-being such as mood and satisfaction that appear only in short experiments after sudden reduction of social media use and may involve feelings such as craving and frustration, not symptoms of mental health disorders.

The notion of ‘correcting’ an effect size in this context means making an indefensible effect size assignment defensible. Ferguson never defines how to determine the effect sizes and his calculations appear to be a hodgepodge of various methods.

See Study #1: Incorrect Effect Size for Lepp 2022 below.

Note that negative effect sizes in Ferguson’s scheme indicate that reductions of SM use are harmful instead of beneficial. Thus negative effect size assignments by Ferguson support the view that SM is not harmful (and help tilt his meta-analytical result toward zero).

For example, on May 1st, an essay in The New York Times announced that psychologist Christopher J. Ferguson will publish a new meta-analysis “showing no relationship between smartphone use and well-being.”

I do not count Davis 2024 as a selection mistake, as it was published recently.

Once again it needs to be mentioned that the notion of ‘correcting’ an effect size in this context means making an indefensible effect size assignment defensible, as there is no precise definition that always yields a single correct effect size. Some effect size assignments, however, are so clearly wrong as to be indefensible, and that applies to the two discussed in this section.

This is per email communications, including an email from Ferguson to Lepp that was forwarded to me by Lepp.

A meta-analysis assigns different weights to different studies based on standard errors (related to sample sizes), but Ferguson does not reveal the weights. In a ‘random effects’ meta-analysis, however, the weights tend to be closer to each other than in a ‘fixed effects’ meta-analysis. Indeed the average of the effect sizes assigned by Ferguson (d = 0.084) is nearly the same as the meta-analytical weighted average (d = 0.088). For this reason it is sufficient to look at simple averages to estimate influences on the meta-analytical result (or when discussing subgroups and moderators).

Determining an effect size for Lepp depends on deciding between pre-post group differences and regression; I chose here the rough average of the two methods. Note that disqualifying Lepp 2022 for not having a true control group would also require disqualifying Gajdics 2021 (d = -0.364) and Przybylski 2021 (d = -0.152), as they have no control groups, and doing so would have increased the average effect size even more (d = 0.12).

Not all the studies include all the information required for determining the effect size, so I can at times formulate only rough estimates. Also, the notion of ‘correcting’ an effect size in this context means making an indefensible effect size assignment defensible, as there is no precise definition that always yields a single correct effect size.

Ferguson seems to have ignored the cortisol declines; this is defensible but illustrates the methodological flexibility involved.

Ferguson is aware of the collaborative doc and its list of experimental studies because on August 15 2023 he added a comment to the doc regarding one of the experimental studies (Faulhaber 2023) on the list.

For example Mitev 2021 states: “Age was not a preregistered covariate. We followed recommendations from expert reviewers to control for it.” Also see the footnote on regression in Collis (including selected controls beyond demographics).

Ferguson also asserts: “recent scholarship has found that effect sizes below r = .10 have a very high false positive rate and are, in essence, indistinguishable from statistical noise (Ferguson & Heene, 2021)”, but the paper he co-wrote provides no evidence for this assertion.

See https://osf.io/jcha2 for Ferguson’s table with sample sizes and effect sizes.

For Collis & Eggers (2022 https://doi.org/10.1371/journal.pone.0272416), Table 6 seems to have the relevant results, albeit perhaps with a subtly different standardization of effect sizes, no? There is an estimated effect on life satisfaction of -0.020 SDs and on mental well-being of -0.018 SDs.