Problematic Effect Size Determinations in Ferguson's Meta-Analysis of Social Media Experiments

Several effect size determinations by Chris Ferguson appear to be indefensible. If corrected, his meta-analysis would produce a statistically significant result, greatly undermining his conclusions.

In his meta-analysis of 27 social media experiments, psychologist Chris Ferguson assigns three effect sizes that are grossly inaccurate (see section Erroneous Effect Size Determinations below for details).

All three indefensible effect sizes are heavily biased toward the negative, and correcting them would substantially increase the average effect size in Ferguson’s meta-analysis as well as comfortably exclude zero from its confidence interval (making the result statistically significant).1

Since Ferguson misinterprets lack of statistical significance as evidence of no effect, the correction of his effect sizes invalidates the premise of his flawed reasoning and of his announcement that his meta-analysis finds that reducing social media time has NO impact on mental health.2

Furthermore, two of the experimental studies selected by Ferguson violate his own stated inclusion criteria, and both studies have a negative effect size. A third study, a massive experiment that found Facebook abstention led to a substantial decline in depression, was inexplicably left out of the list by Ferguson (see section Inclusion and Exclusion of Studies below for details).

Correcting the study selection alone would also produce a statistically significant result in Ferguson’s meta-analysis.

Note: In Ferguson’s analysis, when the effect size is positive, it indicates that reducing social media is good for mental health while a negative sign indicates the opposite. Positive effect sizes therefore counter Ferguson’s conclusion that reducing social media produces no benefits for mental health.

Assignment of Effect Sizes

For a meta-analysis to be trustworthy, the assignment of an effect size to each experiment by the reviewer must be trustworthy.

This is especially true when the number of studies examined is small and when the lower bound of the confidence interval of the meta-analytical result is close to zero — meaning a single erroneous or biased assignment of effect size could make a statistically significant finding insignificant (inclusion of zero in the 95% confidence interval), especially when a sign is reversed (e.g. a positive effect is recorded as a negative effect).

This issue is all the more important because Ferguson misinterprets lack of statistical significance as evidence of no effect.

Note: the list of effect sizes assigned by Ferguson is at https://osf.io/jcha2 and the list of studies included by Ferguson is at https://osf.io/27dx6 (contains misspellings and broken links).

Erroneous Effect Size Determinations

Comparing the Ferguson effect size with information in the Abstracts of the studies raises red flags for three experiments: Collis 2022, Brailovskaia 2022, and Lepp 2022; subsequent examination of the full text confirmed that the effect size assignments by Ferguson are indefensible.

Study #1: Incorrect Effect Size for Lepp 2022

The effect size that Ferguson assigned to Lepp 2022 is the highest negative impact of all the studies on his list: d = -0.365.

The Lepp study was a social media exposure experiment, and the authors concluded that it provided evidence that such exposure was detrimental to well-being:

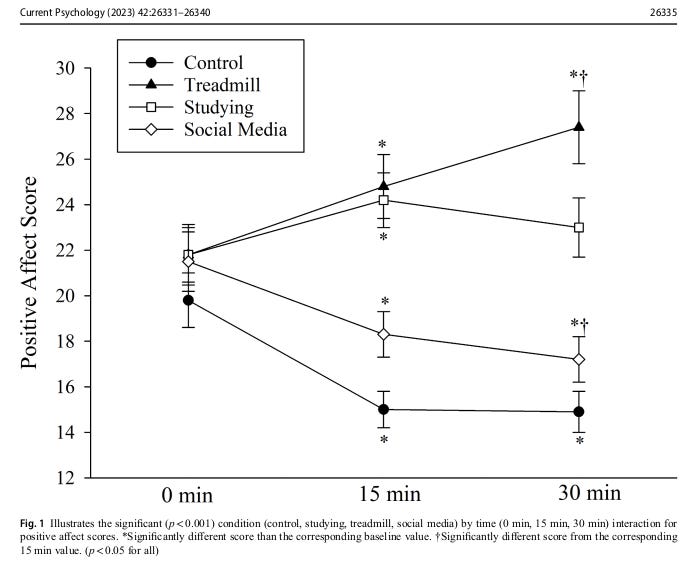

Results demonstrated that social media use for 30 min caused a significant decrease in positive affect.

So how is it possible that this experiment received the largest negative effect size among all the studies, indicating that social media exposure benefited participants greatly?

The only plausible answer is that Ferguson misread the term ‘control’ as the authors used it when describing their experiment:

All participants completed the following 30-minute activity conditions: treadmill walking, self-selected schoolwork (i.e., studying), social media use, and a control condition where participants sat in a quiet room (i.e., do nothing).

The problem is that the ‘control condition’ was not intended to mean what control typically means in experiments, that is, a test for a placebo (or nocebo) effect. This is clear from the following hypothesis stated by the authors:

Indeed the college students who were required to sit in class and literally do nothing for 30 minutes were understandably very irritated by this and it showed up on the post-test results:

So positive affect scores fell greatly after social media exposure, but they fell similarly after imposed inactivity. If Ferguson treated the control group as a true control condition, the deleterious impact of social media exposure on positive affect was ‘canceled’ by the similar impact of imposed inactivity.

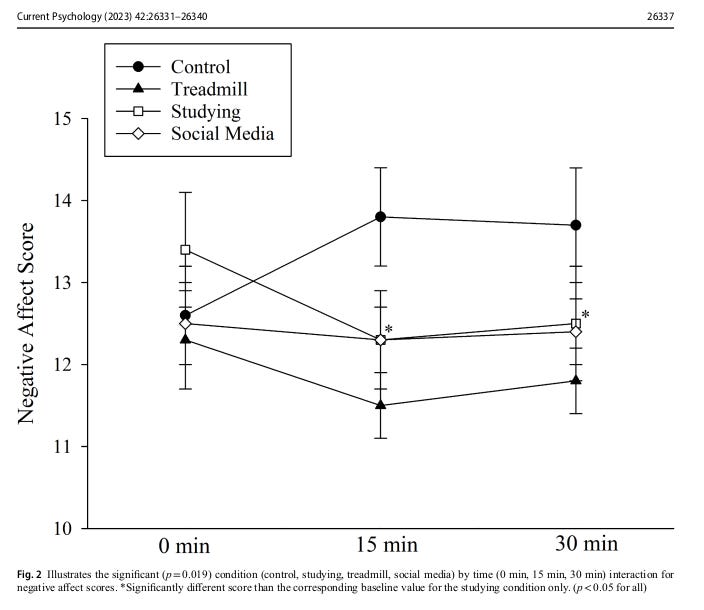

The deleterious impact of imposed inactivity was even more pronounced for negative affect (some very angry students):

Social media had little impact on negative affect, but if Ferguson subtracted the deleterious impact of ‘imposed inactivity’ from the no effect of social media exposure, the resulting effect size would indicate substantial (but illusory) benefits of social media exposure.

Needless to say, the authors never consider the ‘true’ impact of social media exposure to be one where the imposed inactivity effects are subtracted as if they were a placebo (nocebo) effect. They clearly did not intend the term ‘control’ to be used this way; otherwise they could not have concluded that social media use is bad for students and recommended in their Conclusion that students minimize social media use.
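The cancellation described above can be illustrated with a small sketch. The numbers below are hypothetical and are not taken from Lepp 2022, and the formula is only one plausible way an effect could be computed; how Ferguson actually derived his figure is not known from his materials. Here a negative value means the activity lowered positive affect.

```python
def change_score_effects(pre_sm, post_sm, pre_ctrl, post_ctrl, sd):
    """Return (pre/post effect, control-adjusted effect) in SD units.

    Negative values mean social media use lowered the outcome.
    This is an illustrative formula, not Ferguson's documented method.
    """
    # Effect measured as the simple pre/post change after social media use.
    within = (post_sm - pre_sm) / sd
    # Effect after subtracting the change seen in the 'control' condition.
    adjusted = ((post_sm - pre_sm) - (post_ctrl - pre_ctrl)) / sd
    return within, adjusted


# Hypothetical positive-affect means (NOT data from Lepp 2022):
# affect drops by 4 points after social media and by 5 after imposed inactivity.
within, adjusted = change_score_effects(
    pre_sm=30.0, post_sm=26.0, pre_ctrl=30.0, post_ctrl=25.0, sd=8.0
)
print(within, adjusted)
```

With these made-up numbers the pre/post effect of social media use is -0.5, yet the control-adjusted effect is +0.125: a harmful exposure appears mildly beneficial only because the comparison condition was even more unpleasant.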

After I emailed Ferguson with the Lepp 2022 effect size criticism above and asked whether there would be a correction, Andrew Lepp of Kent State University, whom I copied, replied to thank me “for the accurate description of our referenced study” and urged Ferguson to answer my inquiry. Ferguson replied solely to Lepp, stating: “I’m comfortable with my interpretation of your data as related to the specific questions of the meta”.

Thus, out of the 27 studies examined by Ferguson, the one providing the supposedly strongest evidence against harmful impacts of social media (per Ferguson’s effect sizes) comes from a study whose authors concluded the opposite and dispute Ferguson’s effect size determination.

Study #2: Incorrect Effect Size for Collis 2022

Collis 2022 is an anomaly: out of the 10 social media reduction experiments that lasted over a week, it is the only one to which Ferguson assigned a substantially negative effect size (meaning social media is good for mental health).

The problem is that this appears to be due to an error made by Ferguson.

Nothing in the study suggests the d = -0.138 impact determined by Ferguson.

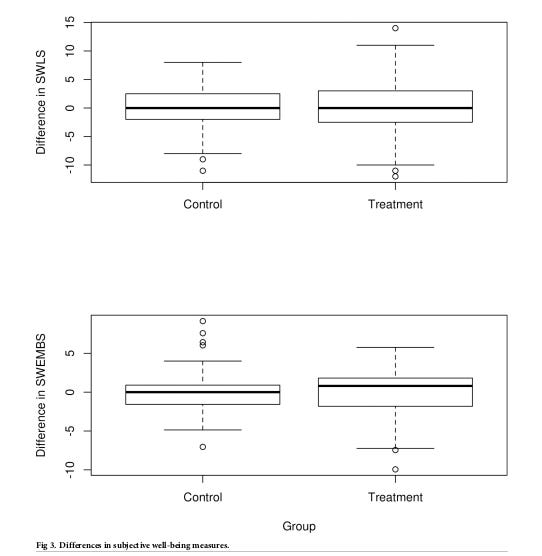

If anything, social media reduction seems to reveal a small benefit per Figure 3 of the paper:

Regression models in Table 6 provide correlations too minuscule (|r| <= 0.02) to explain d = -0.138.

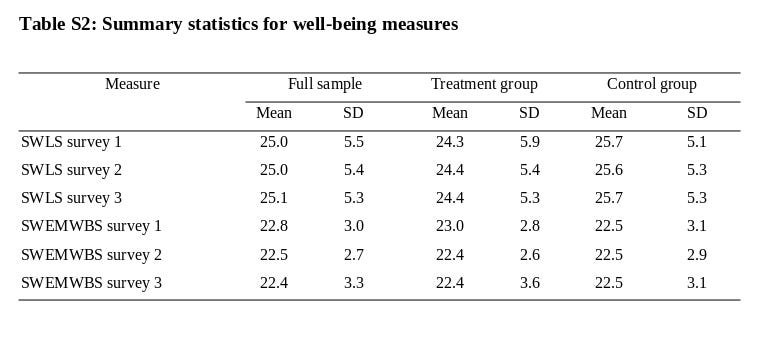

The authors do, however, include Table S2: Summary statistics for well-being measures:

Perhaps Ferguson calculated d by comparing Survey 1 with Survey 3, and there is indeed a substantial decline in SWEMWBS for the treatment group.

The problem is, however, that one needs to compare Survey 2 (pre-test) with Survey 3 (post-test).

Survey 1 was a ‘calibration’ survey that took place 3 months before the experiment started — to assign this effect to an experiment that began *after* the decline occurred is absurd.

In view of the above, it seems likely that the d = -0.138 for Collis 2022 is due to Ferguson’s misreading of Table S2.3

Study #3: Incorrect Effect Size for Brailovskaia 2022

The effect size for Brailovskaia 2022 on Ferguson’s list is d = 0.

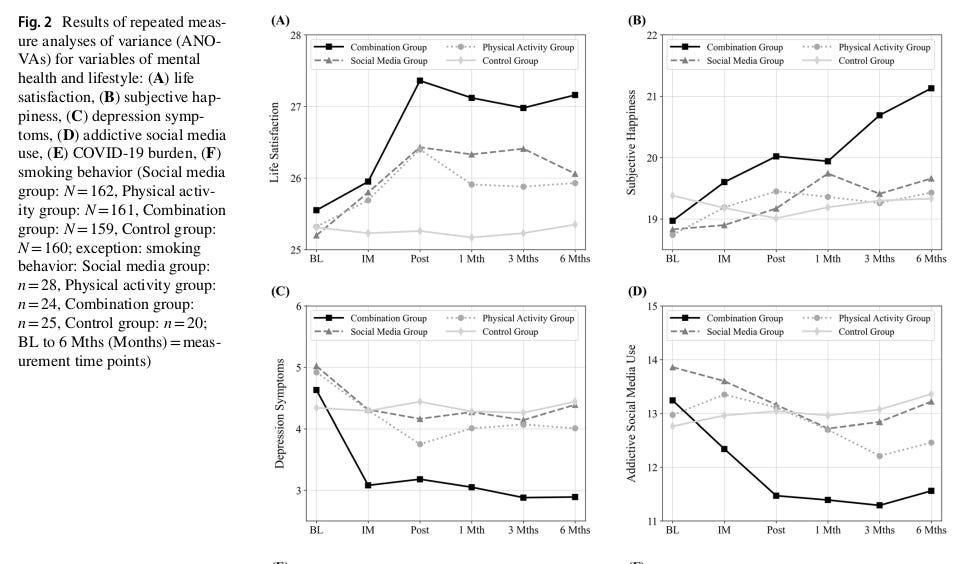

In reality, the authors report numerous benefits to well-being:

Results In the experimental groups, (addictive) SMU, depression symptoms, and COVID-19 burden decreased, while physical activity, life satisfaction, and subjective happiness increased.

There is no indication of any other well-being measures that could counter the ones mentioned by the authors (even smoking declined in the social media reduction group).

The benefits of social media reduction remained even 6 months after the experiment ended:

I’m at a loss as to how this could translate to a d = 0 result unless this effect size is a typo.4

Control Group Issues

Ferguson could have decided to either exclude Lepp 2022 for failing to have a reasonable control group, or to calculate the effect size simply as the difference between pre-treatment and post-treatment measures.

Both decisions would have been defensible in my view.

For Ferguson to exclude Lepp 2022 would mean, however, that he would have to also exclude two additional studies on his list: Gajdics 2021 (d = -0.364) and Przybylski 2021 (d = -0.152) — neither of these had a control group.

Inclusion and Exclusion of Studies

The result of the meta-analysis was also affected by two cases of indefensible inclusion and one case of indefensible exclusion.

Gajdics 2021 (d = -0.364) is not a true social media experiment: it is a one-day experiment in which high school students went without their phones. The effects of nomophobia (NO MObile PHone PhoBIA) are no doubt much stronger than the impact of a mere social media ban (especially during school hours in high school!). In other words, the effects are unlikely to reflect social media reduction; they more likely reflect the phone ban and the resulting nomophobia.

Since Gajdics 2021 has the second strongest negative d in the meta-analysis, its proper exclusion would have had considerable impact.

In Deters 2013 (d = -0.207) the treatment is simply to increase the frequency of updating one’s Facebook status for a week, the result being a decline in feelings of loneliness. There is, however, no evidence whatsoever in the study that time spent on social media increased, and there are good reasons to suspect it did not: communication with friends and family may increase sense of purpose and therefore decrease passive social media use, which takes up most of the online time of heavy users. Ferguson explicitly declared that studies must examine time spent on social media use, and yet he included a study that clearly violates this requirement.

The exclusion of Mosquera 2019, on the other hand, appears indefensible: this is a massive study (1765 participants) that found a significant decrease in depression after one week of Facebook abstention. The exclusion of this study is all the more puzzling given that it is listed in the Experimental Evidence section of Social Media and Mental Health: A Collaborative Review compiled by Haidt and Twenge and has been covered in the media (see College students who go off Facebook for a week consume less news and report being less depressed).

Impact on Meta-Analysis

To summarize the above problems in context, see the table of studies that Ferguson released together with the effect sizes he assigned:5

I marked studies that should not have been included (per Ferguson’s own rules) in red, and I marked the severely erroneous effect sizes also in red.

The average d in the table above is 0.084.

Correcting Lepp 2022 from d = -0.365 to a reasonable yet conservative d = 0.250 increases the average effect to d = 0.107.6

This illustrates how highly sensitive the average is to even a single error in the determination of effect size.

Note that disqualifying Lepp 2022 would require also disqualifying Gajdics 2021 (d = -0.364) and Przybylski 2021 (d = -0.152) and so would increase the average even more (d = 0.122).

If we also assign reasonable yet conservative estimates of d = 0 to Collis 2022 and d = 0.1 to Brailovskaia 2022, the average rises to d = 0.116.

If we furthermore remove the two inappropriate studies from the list, the average increases to d = 0.148.
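The arithmetic behind these averages can be checked directly from the figures quoted in the text. The sketch below assumes the plain average of 0.084 is taken over all 27 studies and works from that rounded value, so it reproduces the results only to about three decimals; the variable names are illustrative.

```python
# Figures quoted in the text; N and the rounded mean are taken as given.
N = 27
MEAN_D = 0.084

total = N * MEAN_D  # implied sum of Ferguson's assigned effect sizes

# Step 1: correct Lepp 2022 from -0.365 to 0.250.
total_lepp = total + (0.250 - (-0.365))
mean_lepp = total_lepp / N

# Step 2: also set Collis 2022 to 0 (from -0.138)
# and Brailovskaia 2022 to 0.1 (from 0).
total_three = total_lepp + (0.0 - (-0.138)) + (0.1 - 0.0)
mean_three = total_three / N

# Step 3: also drop Gajdics 2021 (-0.364) and Deters 2013 (-0.207),
# which leaves 25 studies.
total_final = total_three - (-0.364) - (-0.207)
mean_final = total_final / (N - 2)

print(round(mean_lepp, 3), round(mean_three, 3), round(mean_final, 3))
```

Rounded to three decimals, the three steps give 0.107, 0.116, and 0.148, matching the averages stated above.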

Note that a meta-analysis weights effect sizes differently according to their variance, and since Ferguson withheld this information (as well as the weights assigned by the software he used), precise reproduction of his analysis is impossible.

Ferguson’s result d = 0.088, however, is slightly higher than the plain average d = 0.084.

For this reason the above corrections would likely produce a meta-analytical result of roughly d = 0.15.

Ferguson’s confidence interval (−0.018, 0.197) would almost certainly exclude zero even if we only corrected Lepp 2022, because the correction would both shift the interval upward and contract it due to reduced heterogeneity of the effect sizes. Adding the other corrections would no doubt produce a confidence interval well above zero.
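The mechanism (an upward shift plus a narrower interval once an outlier is corrected) can be sketched with a random-effects model. The example below uses the DerSimonian-Laird estimator, which is an assumption on my part since Ferguson's model and software settings are not stated here, and it uses made-up effect sizes and variances rather than his data. It illustrates the mechanism only and does not estimate the real corrected result.

```python
import math


def random_effects(effects, variances):
    """DerSimonian-Laird random-effects pooled estimate with a 95% CI."""
    k = len(effects)
    w = [1.0 / v for v in variances]  # fixed-effect (inverse-variance) weights
    fixed = sum(wi * d for wi, d in zip(w, effects)) / sum(w)
    # Cochran's Q measures heterogeneity around the fixed-effect estimate.
    q = sum(wi * (d - fixed) ** 2 for wi, d in zip(w, effects))
    c = sum(w) - sum(wi ** 2 for wi in w) / sum(w)
    tau2 = max(0.0, (q - (k - 1)) / c)  # between-study variance
    w_star = [1.0 / (v + tau2) for v in variances]
    pooled = sum(wi * d for wi, d in zip(w_star, effects)) / sum(w_star)
    se = math.sqrt(1.0 / sum(w_star))
    return pooled, pooled - 1.96 * se, pooled + 1.96 * se


# Hypothetical effect sizes and sampling variances (NOT Ferguson's data).
# The last entry plays the role of a sign-reversed outlier.
effects = [0.30, 0.20, 0.15, 0.10, 0.05, -0.365]
variances = [0.01] * 6

before = random_effects(effects, variances)
after = random_effects(effects[:-1] + [0.250], variances)

print("before:", before)
print("after: ", after)
```

In this toy data set the interval spans zero before the correction; after it, the pooled estimate is higher, the between-study variance estimate falls, and the narrower interval lies entirely above zero.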

Discussion

It is important to note that Ferguson misinterprets lack of statistical significance as evidence that there is no effect and advertises his study as having found that reducing social media time has NO impact on mental health.

Such misinterpretation seems unfortunately common in psychology, which in turn magnifies the adverse impact of statistically insignificant findings in influential papers such as this review, published by Ferguson in Psychology of Popular Media.

It is also important to keep in mind that there are many additional flaws in Ferguson’s review, some yet to be discussed by me and some already identified in my initial response (Fatally Flawed: Social Media Experiments Review by Christopher J. Ferguson).

Furthermore, Ferguson should provide his calculations of effect size (and standard error) that he assigned to each of the studies, so that these can be independently reviewed — otherwise it is practically impossible to reproduce his meta-analysis.

Conclusion

In view of the above problems, Ferguson’s meta-analysis is based on highly erroneous methodological determinations and is severely biased in support of his conclusions.

Ferguson needs to correct the effect sizes for Collis 2022, Brailovskaia 2022, and Lepp 2022; Ferguson should also include Mosquera 2019 and exclude Gajdics 2021 and Deters 2013.

Since such corrections will switch the result of Ferguson’s own meta-analysis from an insignificant to a significant average effect size, the premise of Ferguson’s main argument will disappear.

Even a cursory review of Ferguson’s methodology therefore has catastrophic consequences for the reliability of his analysis and invalidates his conclusion that experimental evidence undermines concerns over social media being harmful to mental health.

I do not think that Ferguson’s ‘meta-analysis’ of such wide-ranging types of experiments, with a single ‘effect size’ assigned to disparate and arguably incommensurate aspects of well-being, often measured on incompatible scales, makes any scientific sense. Since a respectable psychological journal published the meta-analysis, however, it will legitimate the result for many psychologists and most of the public. This is why I point out the errors in the meta-analysis and how their correction invalidates the premises of Ferguson’s already flawed reasoning.

There is a second flaw in Ferguson’s reasoning, namely misinterpretation of Cohen’s effect size when applied to small ordinal scales common in well-being measures; a corrected meta-analysis also invalidates the premise of this flawed argument, since the upper bound of the confidence interval moves above the mistaken minimal effect size decreed by Ferguson.

I’ve sent an email inquiry to Ferguson but received no reply so far.

I’ve sent an email inquiry to Ferguson on May 10 but received no reply so far.

Citation entries are per Ferguson (Collins should be Collis).

Disqualifying Lepp 2022 would require also disqualifying Gajdics 2021 (d = -0.364) and Przybylski 2021 (d = -0.152) and so would have increased the average effect size even more (d = 0.12).